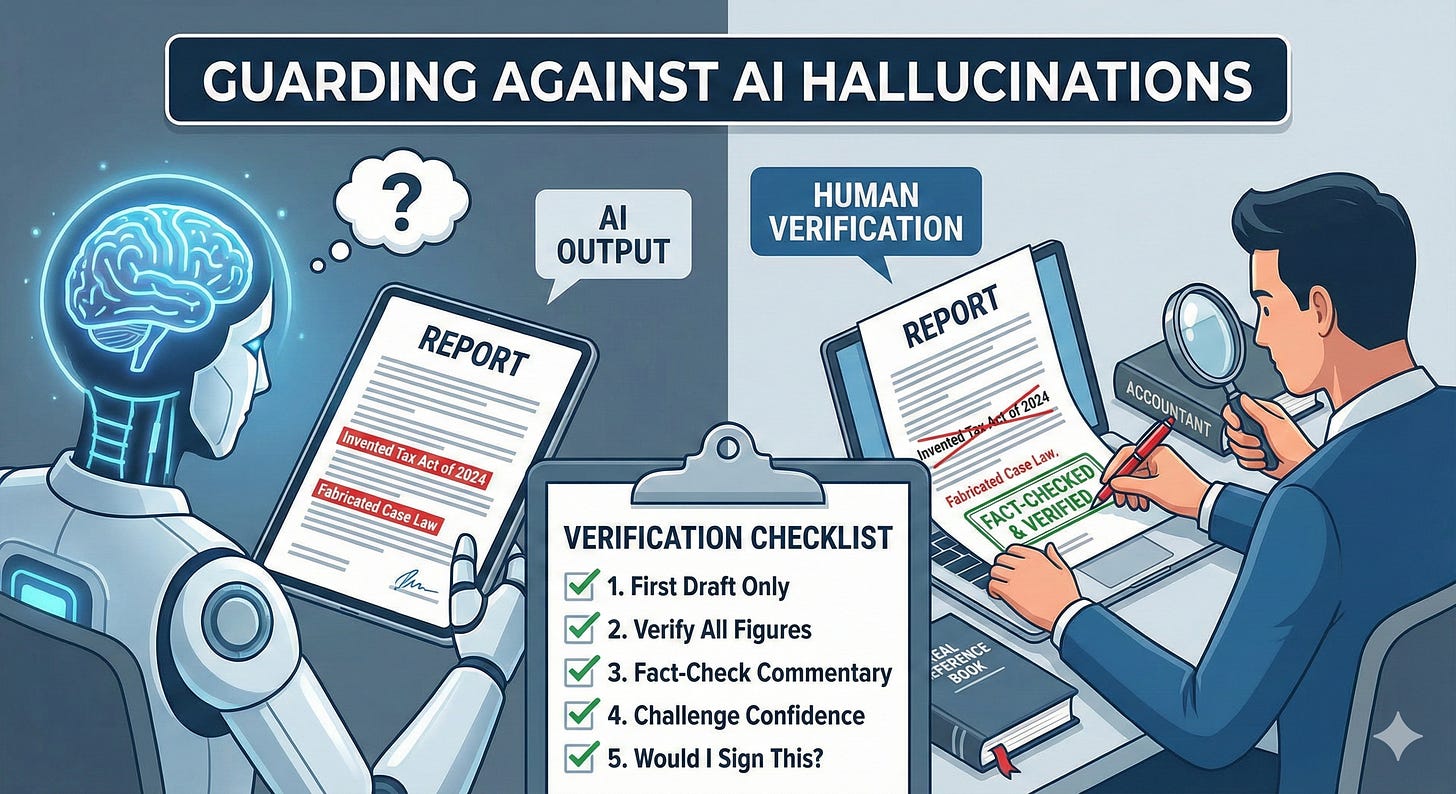

Guarding Against AI “Hallucinations” – A Verification Checklist

Why Human Judgment and Verification Still Matter in an AI-Assisted World

Artificial intelligence is increasingly being used in accountancy firms to draft reports, summarise financial data, and prepare narrative commentary. While these tools can save time and improve consistency, they also introduce a serious risk that every firm must understand and control: AI hallucinations.

An AI hallucination occurs when a system produces information that sounds plausible but is factually incorrect, misleading, or entirely invented.

Why This Matters

AI does not “know” facts in the human sense. Generative AI tools predict likely words from patterns in their training data. That means they can confidently present incorrect figures, references, or conclusions, especially when asked to summarise complex or incomplete information.

This is not a theoretical risk.

The Real-World Risk

In recent cases in the United States, lawyers used AI tools to help prepare legal filings, and the tools fabricated case law that did not exist. The invented citations were submitted to court under a professional’s name, resulting in reputational damage, sanctions, and public scrutiny.

The same risk applies to accountants:

Invented tax references

Incorrect financial ratios

Misstated assumptions

Confident but wrong narrative commentary

AI will not reliably flag these errors for you. It will present them as fact.

The Core Principle: Human Accountability Never Transfers

No matter how advanced the tool:

The accountant remains fully responsible for the output

Professional judgement cannot be delegated to software

Regulators, insurers, and clients will always hold the human professional accountable

AI is an assistant — not an authority.

A Practical Verification Checklist for AI Outputs

Every firm using AI for reporting, analysis, or commentary should adopt a simple but strict verification process.

1. Treat AI Output as a First Draft Only

AI-generated text should be viewed as:

A starting point

A time-saving draft

A summary to be checked

It should never be treated as a final answer.

2. Independently Verify All Facts and Figures

For every AI-generated report or narrative:

Cross-check figures against source data

Recalculate key ratios manually or via trusted software

Confirm dates, thresholds, and assumptions

If you cannot verify it independently, do not use it.
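As one illustration of this step, the sketch below recalculates two ratios from source figures and compares them with the values quoted in an AI draft. It is a minimal sketch under stated assumptions, not a prescribed method: the field names (`current_assets`, `cost_of_sales`, and so on), the function names, the 0.5% tolerance, and all figures are hypothetical and would need to match your own ledger exports and firm policy.

```python
from dataclasses import dataclass
from math import isclose
from typing import Optional


@dataclass
class RatioCheck:
    name: str
    reported: float                # figure quoted in the AI draft
    recalculated: Optional[float]  # figure recomputed from source data, if possible
    verified: bool


def recalculate_ratios(source: dict) -> dict:
    """Recompute ratios directly from source figures (hypothetical field names)."""
    return {
        "current_ratio": source["current_assets"] / source["current_liabilities"],
        "gross_margin": (source["revenue"] - source["cost_of_sales"]) / source["revenue"],
    }


def verify_reported_ratios(source: dict, reported: dict, rel_tol: float = 0.005) -> list:
    """Compare each AI-reported ratio with an independent recalculation."""
    recalculated = recalculate_ratios(source)
    checks = []
    for name, value in reported.items():
        expected = recalculated.get(name)
        # A figure with no independent recalculation counts as unverified.
        ok = expected is not None and isclose(value, expected, rel_tol=rel_tol)
        checks.append(RatioCheck(name, value, expected, ok))
    return checks


# Illustrative figures only, not taken from any real client.
source_data = {
    "current_assets": 150_000.0,
    "current_liabilities": 100_000.0,
    "revenue": 400_000.0,
    "cost_of_sales": 260_000.0,
}
ai_reported = {"current_ratio": 1.5, "gross_margin": 0.40, "interest_cover": 4.2}

for check in verify_reported_ratios(source_data, ai_reported):
    status = "OK" if check.verified else "DO NOT USE"
    print(f"{check.name}: reported={check.reported}, "
          f"recalculated={check.recalculated} -> {status}")
```

Any ratio the script cannot recompute is marked as unverified rather than passed through, which mirrors the rule above. A script like this supports the reviewer but does not replace the review itself.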

3. Use External Sources to Fact-Check Commentary

When AI produces explanations or narrative insights:

Confirm tax or regulatory references using official guidance

Validate financial interpretations against the applicable accounting standards

Ensure conclusions actually follow from the data

Well-written does not mean correct.

4. Be Extra Cautious with Confidence-Laden Language

AI often uses authoritative phrasing such as:

“This clearly shows…”

“It is evident that…”

“The optimal approach is…”

These statements must be challenged. Replace confidence with evidence-based judgement.
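A reviewer could pre-screen a draft for this kind of phrasing before reading it closely. The following is a rough sketch that assumes a simple case-insensitive phrase match is good enough for a first pass. The phrase list contains only the three examples given above, and the function name `flag_confident_language` is hypothetical.

```python
import re

# The three example phrases from the checklist; extend for your own house style.
CONFIDENCE_PHRASES = [
    "this clearly shows",
    "it is evident that",
    "the optimal approach is",
]


def flag_confident_language(text: str, phrases=CONFIDENCE_PHRASES) -> list:
    """Return (line_number, phrase, line) for every confident phrase found."""
    pattern = re.compile("|".join(re.escape(p) for p in phrases), re.IGNORECASE)
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for match in pattern.finditer(line):
            hits.append((line_no, match.group(0).lower(), line.strip()))
    return hits


# Illustrative draft text, not taken from a real report.
draft = (
    "Revenue grew 12% year on year.\n"
    "This clearly shows strong demand.\n"
    "It is evident that costs are under control."
)

for line_no, phrase, line in flag_confident_language(draft):
    print(f"Line {line_no}: challenge '{phrase}' -> {line}")
```

A match only marks a sentence for challenge. Whether the underlying claim is supported by evidence remains a matter for the reviewer's judgement.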

5. Apply a “Would I Sign This?” Test

Before any AI-assisted output is issued:

Ask: Would I sign my name to this as professional advice?

Ask: Would I defend this to a regulator or insurer?

If the answer is no, it is not ready.

Embedding This into Firm Policy

To manage this risk properly, firms should:

Mandate human review of all AI-generated outputs

Prohibit unsupervised AI use for advice, reports, or filings

Train staff to recognise hallucination risk

Clearly state in the AI policy that accountability remains with the professional

This is not about slowing innovation. It is about protecting trust.

The Bottom Line

AI can dramatically improve efficiency in narrative reporting and analysis — but it cannot be trusted blindly.

AI can sound confident and still be wrong

Responsibility for errors always rests with the human professional

Verification is not optional — it is a professional duty

Used wisely, AI is a powerful assistant.

Used carelessly, it becomes a silent liability.

Guardrails, not guesswork, are what allow firms to adopt AI safely and confidently.